Hi, I'm trying to predict a price series... However, the predicted price jumps upward "with a peak". Does anyone know why?

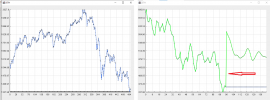

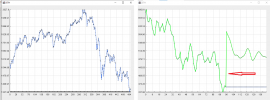

The "kink" can be seen here:

Java:

    private void predict(List<Double> tprices1, String symbol) {
        System.out.println("Create data");
        final int n1 = tprices1.size(); // input data length, 168 hours, one week
        final int n2 = 64; // batch length
        final int n3 = 24; // sample length, 24 hours, one day
        final int n4 = 48; // prediction length, 48 hours, two days

        // Find the value range used for min-max scaling.
        double min1 = Double.MAX_VALUE;
        // Double.MIN_VALUE is the smallest positive double, so start from -Double.MAX_VALUE instead.
        double max1 = -Double.MAX_VALUE;
        for (Double double1 : tprices1) {
            if (double1 < min1) {
                min1 = double1;
            }
            if (double1 > max1) {
                max1 = double1;
            }
        }

        // Pad the series with n4 copies of the last known price as placeholders for the prediction range.
        for (int i = 0; i < n4; i++) {
            tprices1.add(tprices1.get(n1 - 1));
        }

        // Each data set holds n2 windows of n3 consecutive scaled prices;
        // the label is the input window shifted one step ahead.
        List<DataSet> dataSets0 = new ArrayList<>();
        for (int k = 0; k < n1 + n4 - n2 - n3 - 1; k++) {
            INDArray input = Nd4j.create(new int[] { n2, 1, n3 }, 'f');
            INDArray label = Nd4j.create(new int[] { n2, 1, n3 }, 'f');
            for (int i = 0; i < n2; i++) {
                for (int j = 0; j < n3; j++) {
                    input.putScalar(new int[] { i, 0, j }, (tprices1.get(k + i + j) - min1) / (max1 - min1));
                    label.putScalar(new int[] { i, 0, j }, (tprices1.get(k + i + j + 1) - min1) / (max1 - min1));
                }
            }
            dataSets0.add(new DataSet(input, label));
        }

        // Training sets: built from the known prices only.
        List<DataSet> dataSets1 = dataSets0.subList(0, n1 - n2 - n3);
        // Prediction sets: the last n4 data sets, which increasingly overlap the padded range.
        List<DataSet> dataSets2 = dataSets0.subList(n1 - n2 - n3 - 1, dataSets0.size());
        System.out.println(dataSets1.size());
        System.out.println(dataSets2.size());

        System.out.println("Build lstm networks");
        final int nIn = 1;
        final int nOut = 1;
        final int seed = 345;
        final int iterations = 1;
        final double learningRate = 0.05;
        final int lstmLayer1Size = 256;
        final int lstmLayer2Size = 256;
        final int denseLayerSize = 32;
        final double dropoutRatio = 0.2;
        final int truncatedBPTTLength = n3;

        MultiLayerConfiguration conf = new NeuralNetConfiguration.Builder()
                .seed(seed)
                .iterations(iterations)
                .learningRate(learningRate)
                .optimizationAlgo(OptimizationAlgorithm.STOCHASTIC_GRADIENT_DESCENT)
                .weightInit(WeightInit.XAVIER)
                .updater(Updater.RMSPROP)
                .regularization(true)
                .l2(1e-4)
                .list()
                .layer(0, new GravesLSTM.Builder().nIn(nIn).nOut(lstmLayer1Size)
                        .activation(Activation.TANH).gateActivationFunction(Activation.HARDSIGMOID)
                        .dropOut(dropoutRatio).build())
                .layer(1, new GravesLSTM.Builder().nIn(lstmLayer1Size).nOut(lstmLayer2Size)
                        .activation(Activation.TANH).gateActivationFunction(Activation.HARDSIGMOID)
                        .dropOut(dropoutRatio).build())
                .layer(2, new DenseLayer.Builder().nIn(lstmLayer2Size).nOut(denseLayerSize)
                        .activation(Activation.RELU).build())
                .layer(3, new RnnOutputLayer.Builder().nIn(denseLayerSize).nOut(nOut)
                        .activation(Activation.IDENTITY).lossFunction(LossFunctions.LossFunction.MSE).build())
                .backpropType(BackpropType.TruncatedBPTT)
                .tBPTTForwardLength(truncatedBPTTLength)
                .tBPTTBackwardLength(truncatedBPTTLength)
                .pretrain(false)
                .backprop(true)
                .build();

        MultiLayerNetwork net = new MultiLayerNetwork(conf);
        net.init();
        net.setListeners(new ScoreIterationListener(10));

        System.out.println("train...");
        for (int epoch = 0; epoch < 1; epoch++) { // only one epoch
            System.out.println("Epoch: " + epoch);
            for (int i = 0; i < dataSets1.size(); i++) { // iterate over all training mini-batches
                net.fit(dataSets1.get(i)); // fit model using mini-batch data
            }
            net.rnnClearPreviousState(); // clear previous state
        }

        System.out.println("predict...");
        double[] predicts = new double[n4];
        for (int i = 0; i < n4; i++) {
            // Feed one prediction set through the network and scale one output value back to the price range.
            predicts[i] = net.rnnTimeStep(dataSets2.get(i).getFeatureMatrix()).getDouble(n3 - 1) * (max1 - min1) + min1;
        }

        System.out.println("Show prediction");
        // toShow1: the last 2 * n4 known prices followed by the n4 padded values.
        List<Double> toShow1 = tprices1.subList(n1 - n4 * 2, n1 + n4);
        // toShow2: the same 2 * n4 known prices followed by the n4 predictions.
        List<Double> toShow2 = new ArrayList<>();
        for (int i = 0; i < n4 * 2; i++) {
            toShow2.add(toShow1.get(i));
        }
        for (int i = 0; i < n4; i++) {
            toShow2.add(predicts[i]);
        }

        JFrame gp = new JFrame(symbol);
        gp.add(new GraphPanel(toShow1, toShow2));
        gp.setSize(800, 600);
        gp.setDefaultCloseOperation(WindowConstants.DISPOSE_ON_CLOSE);
        gp.setVisible(true);
    }
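
The min-max scaling that `predict` applies inline, once when filling the input and label arrays and once in reverse when reading the network output, could be pulled into a small helper so both directions use the same range. The following is only a minimal sketch under that assumption: the class `MinMaxScaling` and its methods `fit`, `scale` and `unscale` are hypothetical names that do not appear in the code above, and the handling of a constant series is an added assumption. It starts the search from the infinities because `Double.MIN_VALUE` is the smallest positive double, not the most negative one.

```java
import java.util.List;

/** Hypothetical helper (not from the original post) for min-max scaling of a price series. */
final class MinMaxScaling {
    final double min;
    final double max;

    private MinMaxScaling(double min, double max) {
        this.min = min;
        this.max = max;
    }

    /** Scans the values once and records their smallest and largest element. */
    static MinMaxScaling fit(List<Double> values) {
        if (values.isEmpty()) {
            throw new IllegalArgumentException("values must not be empty");
        }
        // Start from the infinities: Double.MIN_VALUE is the smallest positive
        // double, so it is not a safe starting point for a maximum search.
        double min = Double.POSITIVE_INFINITY;
        double max = Double.NEGATIVE_INFINITY;
        for (double v : values) {
            min = Math.min(min, v);
            max = Math.max(max, v);
        }
        return new MinMaxScaling(min, max);
    }

    /** Maps a price from [min, max] to [0, 1]; a constant series maps to 0 (assumption) to avoid dividing by zero. */
    double scale(double price) {
        return max == min ? 0.0 : (price - min) / (max - min);
    }

    /** Inverse of scale: maps a scaled value (for example a network output) back to the price range. */
    double unscale(double scaled) {
        return scaled * (max - min) + min;
    }
}
```

In `predict`, such a helper would be fitted on `tprices1` before the series is padded, for example `MinMaxScaling s = MinMaxScaling.fit(tprices1);`, with `s.scale(...)` replacing the inline expressions in `putScalar` and `s.unscale(...)` applied to the value returned by `rnnTimeStep`.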